ChatGPT’s engine cooperates more than people but also overestimates human collaboration, according to new research. Scientists believe the study offers valuable clues about deploying AI in real-world applications.

The findings emerged from a famous game-theory problem: the prisoner’s dilemma. There are numerous variations, but the thought experiment typically starts with the arrest of two gang members. Each accomplice is then placed in a separate room for questioning.

During the interrogations, they receive an offer: snitch on your fellow prisoner and go free. But there’s a catch: if both prisoners testify against each other, each will get a harsher sentence than if they had both stayed silent.

Over a series of moves, the players have to choose between mutual benefit and self-interest. Typically, they prioritise collective gains. Empirical studies consistently show that humans will cooperate to maximise their joint payoff, even if they’re total strangers.

It’s a trait that’s unique in the animal kingdom. But does it exist in the digital kingdom?

To find out, researchers from the University of Mannheim Business School (UMBS) developed a simulation of the prisoner’s dilemma. They tested it on GPT, the family of large language models (LLMs) behind OpenAI’s landmark ChatGPT system.

GPT played the game with a human. The first player would choose between a cooperative or selfish move. The second player would then respond with their own choice of move.

Mutual cooperation would yield the optimal collective outcome. But it could only be achieved if both players expected their decisions to be reciprocated.
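For readers who want to see the mechanics, the sequential game described above can be sketched in a few lines of Python. This is only an illustration: the payoff numbers, the function names, and the assumption that a second mover always answers a selfish move with a selfish move are hypothetical and are not taken from the study.

```python
from enum import Enum


class Move(Enum):
    COOPERATE = "cooperate"
    DEFECT = "defect"


# Hypothetical payoffs as (first mover, second mover).
# Illustrative values only; the study's actual payoffs are not given here.
PAYOFFS = {
    (Move.COOPERATE, Move.COOPERATE): (3, 3),  # mutual cooperation
    (Move.COOPERATE, Move.DEFECT): (0, 5),     # first mover is exploited
    (Move.DEFECT, Move.COOPERATE): (5, 0),     # second mover is exploited
    (Move.DEFECT, Move.DEFECT): (1, 1),        # mutual selfishness
}


def play(first, second_response):
    """Play one round: the second mover sees the first move, then replies."""
    second = second_response(first)
    return PAYOFFS[(first, second)]


def reciprocate(first_move):
    """Second-mover strategy: mirror whatever the first mover did."""
    return first_move


def always_defect(first_move):
    """Second-mover strategy: act selfishly regardless of the first move."""
    return Move.DEFECT


def expected_first_mover_payoff(first, p_reciprocate):
    """Expected payoff to the first mover.

    Assumption: cooperation is reciprocated with probability p_reciprocate,
    and a selfish first move is always answered with a selfish reply.
    """
    if first is Move.DEFECT:
        return float(PAYOFFS[(Move.DEFECT, Move.DEFECT)][0])
    rewarded = PAYOFFS[(Move.COOPERATE, Move.COOPERATE)][0]
    exploited = PAYOFFS[(Move.COOPERATE, Move.DEFECT)][0]
    return p_reciprocate * rewarded + (1 - p_reciprocate) * exploited
```

Under these made-up numbers, cooperating first only pays off when the first mover believes the other player will reciprocate more than a third of the time. That is the sense in which cooperation depends on expectations: a player who is more optimistic about reciprocation has more reason to cooperate.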

GPT apparently expects this more than we do. Across the game, the model cooperated more than people do. Intriguingly, GPT was also overly optimistic about the selflessness of the human player.

The findings also point to LLM applications beyond just natural language processing tasks. The researchers proffer two examples: urban traffic management and energy consumption.

LLMs in the real world

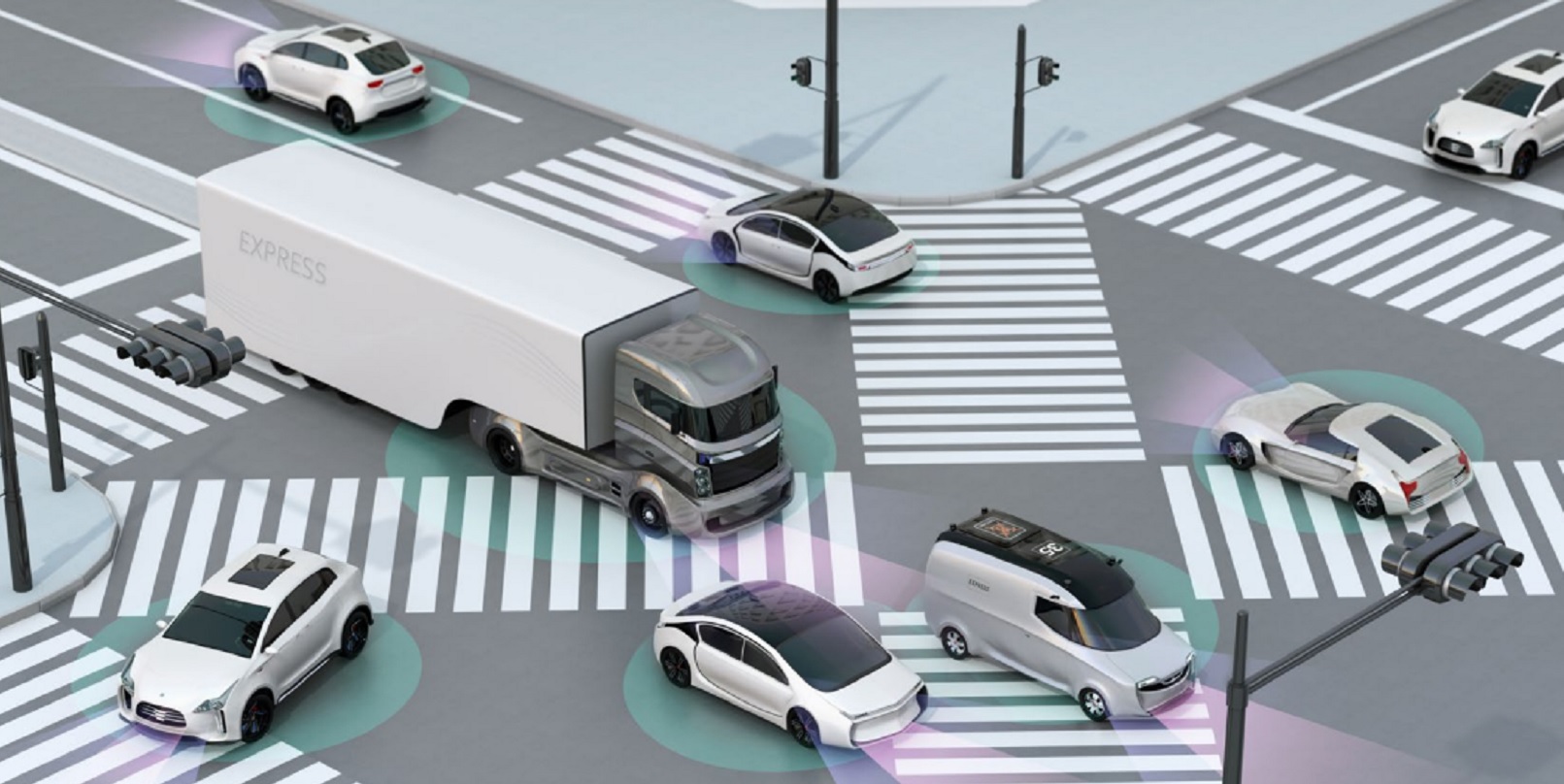

In cities plagued by congestion, motorists face their own prisoner’s dilemma. They could cooperate by driving considerately and using mutually beneficial routes. Alternatively, they could cut others off and take a road that’s quick for them but creates traffic jams for others.

If they act purely in their self-interest, their behaviour will cause gridlocks, accidents, and probably some good, old-fashioned road rage.

In theory, AI could strike the ideal balance. Imagine that each car’s navigation system featured a GPT-like intelligence that used the same cooperative strategies as in the prisoner’s dilemma.

According to Professor Kevin Bauer, the study’s lead author, the impact could be tremendous.

“Instead of hundreds of individual decisions made in self-interest, our results suggest that GPT would guide drivers in a more cooperative, coordinated manner, prioritising the overall efficiency of the traffic system,” Bauer told TNW.

“Routes would be suggested not just based on the quickest option for one car, but the optimal flow for all cars. The result could be fewer traffic jams, reduced commute times, and a more harmonious driving environment.”

Bauer sees similar potential in energy usage. He envisions a community where every household can use solar panels and batteries to generate, store, and consume energy. The challenge is optimising their consumption during peak hours.

Again, the scenario is akin to a prisoner’s dilemma: save energy for purely personal use during high demand or contribute it to the grid for overall stability. AI could provide another optimal outcome.

“Instead of individual households making decisions purely for personal benefit, the system would manage energy distribution by considering the well-being of the entire grid,” Bauer said.

“This means coordinating energy storage, consumption, and sharing in a manner that prevents blackouts and ensures the efficient use of resources for the community as a whole, leading to a more stable, efficient, and resilient energy grid for everyone.”

Ensuring safe cooperation

As AI becomes increasingly integrated into human society, the underlying models will need guidance to ensure that they serve our principles and goals.

To do this, Bauer recommends extensive transparency in the decision-making process and education about effective usage.

He also strongly advises close monitoring of the AI system’s values. The likes of GPT, he says, don’t merely compute and process data, but also adopt aspects of human nature. These may be acquired during self-supervised learning, data curation, or human feedback to the model.

Sometimes, the results are concerning. While GPT was more cooperative than humans in the prisoner’s dilemma, it still prioritised its own payoff over that of the other player. The researchers suspect that this behaviour is driven by a combination of “hyper-rationality” and “self-preservation.”

“This hyper-rationality underscores the imperative need for well-defined ethical guidelines and responsible AI deployment practices,” Bauer said.

“Unrestrained self-preservation instincts in AI may pose societal challenges, particularly in scenarios where AI’s self-preservation tendencies could potentially conflict with the well-being of humans.”